So I did a talk at the South West Test meetup in Bristol last week (at the time of writing the first draft; probably last year by the time I finish…) and someone asked afterwards if I could make it into a blog post.

I was a bit like yeah sure, but also a bit like hmm, because I have already made posts about how I did some of the stuff (basic visual regression test writing) but they are kinda dry/boring and also hard to follow, because I wrote them after the fact and had forgotten how I got from one thing to the next. They also don’t contain any context or human factors, and were generally not meant for actual people to read. They were more of a log for me of things I had done, using examples unrelated to my actual work and thus two or three steps back from what I was working on at any given time.

The talk was about how to find the thing you want to automate, and how to get there as a new or long-time manual tester with no one ‘above’ you in experience or knowledge for guidance. What do you automate? How do you do it? Automation is a big word right now, and because of that it feels like some huge alien thing lurking beyond the horizon, untouchable to a simple n00b like you and me. Even when you’ve read articles or watched videos about it and you think you know what you want to do, how do you get from that “I want to write this automated test” to actually having one written? And what do you do with it afterwards? Where does it go, how does it run?

I’m not saying I have all the answers, or the best answers to any of the questions. There are plenty of better testers than me who have courses and articles about it, so I’ll keep this to a simple purpose: to show you (ie me one year ago, when I’d been a tester for only a couple of weeks) that it is possible to go from zero dev or test knowledge to doing cool things. This is just about my journey, and how I went about it.

Also of interest might be – how did Bruce end up doing a talk in the first place? Well, it came down to some pretty intense negotiations with the meetup organiser, a lot of back-and-forth, editing notes etc. Countless messages, I tell you.

You can see a play-by-play of the incredibly difficult conversation below:

It’s surprisingly easy to do a talk if you want to, so long as you go to meetups and talk to other humanoids. Many meetups are always on the hunt for more interesting and diverse talks, and I genuinely think that everyone has something valuable to contribute to the community – so you should definitely be one of the people giving them.

If you’re in Bristol, then you should come to the South West Test meetups – and if you’re in Bristol and you already go to them, then make sure you come say hi. And tell me your name, even if we’ve met ten times before. I don’t do names very well xD

Yes, I referred to myself as a legend. You can’t stop me. It’s branding now. I might even update this blog and my twitter name and everything. I am Bruce the Legend.

The talk actually started with me telling the story of why I called myself a legend. It wasn’t supposed to – people were still wandering over, Danny still having a chat at the back of the room, so I started telling some people at the front about TestBash. And then suddenly everyone in the room was quiet and listening. I was like – oh okay, I guess this is my talk now.

I did let Danny take the stage back for just a minute to officially introduce the meetup though.

So I haven’t really talked about where I work on this blog before, mostly because when I started it I thought my dad might find it, and wouldn’t approve of me working for a company that makes ads for the gambling industry. (I was right. They were so disappointed in me when they found out that I still sometimes cry thinking about it.) ((Shh, I’m a people-pleaser, I live for the happiness and acceptance of others!))

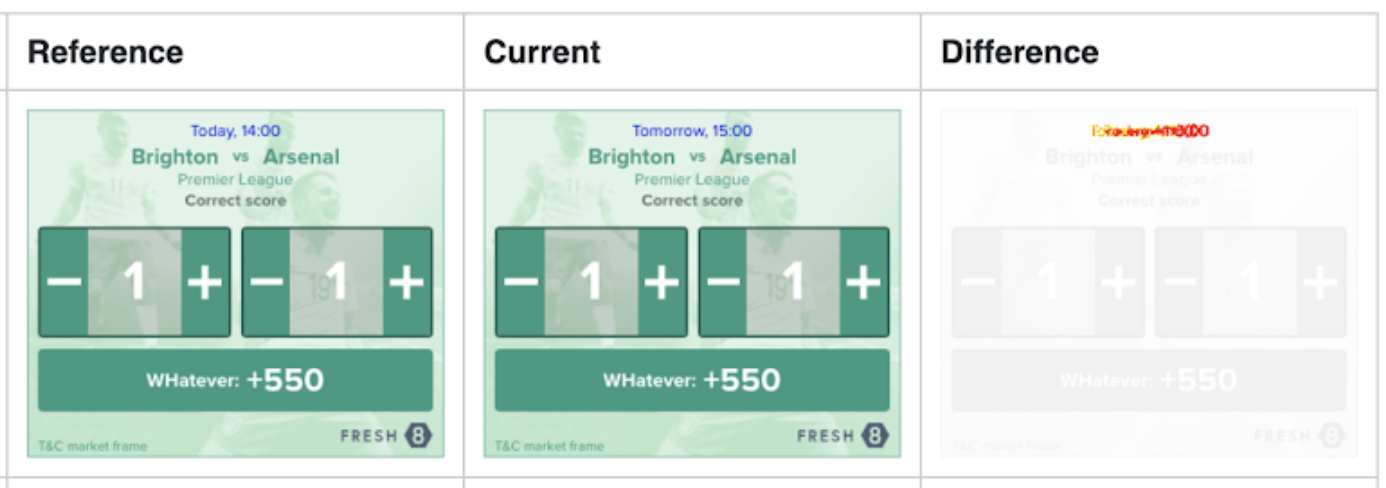

Just so we’re all up to speed, Fresh8 Gaming makes online dynamic, contextualised ads for the gambling industry. If you see an ad that has live odds in it, then it’s probably one of ours. I test those ads, as well as some of the surrounding stuff like a console UI (when the other QA is on holiday), and related tools/services.

I didn’t go into this slide much during the talk, due to the length restriction (5-10 minutes, before questions) but I can give a bit more info here – what is the first step you need to take on this road? What do you need to prepare? What do you need to know? How do you decide what test to automate first? So many questions.

When people talk about automation, they often talk about these big systems with a billion tests run on spun-up environments with xyz bells and whistles, this or that management tool etc etc. And new testers can just stare up at these glowing, holy fountains of glorious knowledge and expertise, and drop to our knees and pray. Cos that would be about as effective as anything we could possibly do to emulate that.

Now, I’m talking from my experience of being one of two QAs in a small company, neither of whom had any dev or test knowledge before joining, with little in the way of specific guidance. If you have a senior QA or test manager with experience in automation, or you have experience yourself in implementing the type of cool system described above, then this is definitely not aimed at you.

Q: I’ve been trying to learn <enter language here> but I keep getting stuck. How do I get to the point where I can write a thing?!

A million and one people came up and asked me this question after the talk.

I totally get it. I’d say “you wouldn’t believe how many times I had to re-learn what objects are and how to write classes because I got stuck and had to restart or change courses” but you would believe it, because it’s what literally everyone who learns JavaScript on their own says and does. You get to a certain point in these beginner tutorials and you get stuck, so you go back a couple of videos and rewatch those to build your foundation a bit. Then something else comes up and you don’t have time, and a few weeks later you can’t remember any of it, so you’re basically watching the whole series from the start all over again. Rinse, repeat.

I was doing that. For seven or eight months I was ‘learning’ JavaScript, going back and forth over the same things and writing the same types of examples along to different beginner courses. In the end, I realised I don’t need to be some coding master who knows off the top of my head the exact syntax required in every single circumstance. Testing tools come with documentation, which tells you the syntax. You just need to know enough to be able to resolve errors. Will you write things in the wrong order, or put a bracket in the wrong place?

Maybe! You don’t know until you write it. My advice is to decide on the test you want to write, decide on a tool that will help you, and then just try to do it. Do the first half of a beginner course like three or four times first, though. I’m not saying you should go into it with 0 knowledge whatsoever.

Q: Ok so I’m supposed to “just do it”, but how do I decide on what test to automate?

This one is a simple question with a multi-faceted answer. Typically, the best things to automate are tasks that are super simple, that you spend ages doing over and over again. Regression tests are usually a good place to start, since you should have a series of simple manual tests/checks that you go through every time you’re testing a new release. If I click this button, does it open the dropdown as usual? Are the correct background images loading in? Basic UI paths or visual components that should look the same every time you load them.

You might have a bunch of ideas for where to start (or if you don’t even know what types of tests exist, then here’s an article that lists and describes some) and be having trouble deciding which to write. It might be a bit cheeky, but you should totally do the one you want to do. I chose visual regression tests not because they were the number one most valuable thing my company could ever have asked me to do, but because out of the vast array of things that we desperately needed, it was the one I was most interested in doing at the time. Watch some videos, read about things and do what interests you. If you have a problem you genuinely want to solve, instead of one you are trying to solve because you kind of feel you should, then you will be a lot more motivated and committed to doing it.

And if you don’t feel that strongly about any, then pick any one. Seriously. If you don’t have a specific request or reason to pick one over another, and no one else is making the decision, then it doesn’t matter. This isn’t advice any education articles out there will give you, but at this point you’re in it for the learning. If you make something that isn’t perfect, and you realise afterwards that you should have done something different – congrats! You did a learn, and you’re probably in a better place to do that other thing, since you’ve just realised the first thing wasn’t as hard as you thought.

A note to remember is that not everything needs automating. Things that are complex, with many steps that change all the time, or tests you do only once in a blue moon… You don’t need to automate these things.

Q: I know the thing I want to automate, how do I decide what tool to use?

Argh, I know right? There are so many tools for the same things, and the people who use them each seem to think that whatever they use is the one true tool and the others are all rubbish. Selenium is amazing! Selenium is awful! Have you tried Cypress? Cucumber4lyfe. Blah blah blah.

Don’t be afraid to just try something. All these tools have good basic documentation. Install one and run it locally, following the instructions on their site or one of the numerous free YouTube tutorials there are bound to be. If you choose one and it turns out to be the wrong thing, well, you just spent a couple of hours learning about a new tool. Maybe it will be useful knowledge in the future. Go try something else.

(Note: don’t go crazy, make sure you’re doing stuff that adds value to the company. xD It might also be that you don’t have the level of autonomy required to just go off and do things. In that case, find a thing you want to do, and take it up with your direct leader or your team. Make the case that you think it will add value, free up your time for a wider range of testing tasks and increase reliability and confidence in releases. It’s all about dem business cases.)

For me, the limiting factor was that our ads load inside an iFrame. That means that instead of loading its elements as part of the web page, the ad renders an entirely new page in a little box. The contents of that page are an advert, which has its own HTML document. This is fine, but it gets annoying when you want to use certain tools.

For example, say you write a bit of code that says “wait for the page to load, find this button, click on the button and then eat some cheese”. It will scour the HTML document of the webpage to find a reference to this button. But it won’t find the button, because it’s not part of the webpage. It’s part of a different HTML document being loaded inside a box on the page. So it will sadly never be able to click the button and collect its delicious cheesy reward. (Don’t know why I used this example, I’m both vegan and lactose intolerant.) There are workarounds, but in general you can’t get full functionality on a lot of test tools when it comes to interacting with elements in an iFrame.
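To make the iFrame problem a bit more concrete, here’s a toy model in plain JavaScript. It is not a real browser or Puppeteer API: the objects and the `findById`/`findInFrame` helpers are made up for illustration. Each “document” only knows about its own elements, so searching the page never reaches the button inside the ad’s document, while finding the iFrame first and then searching its document does.

```javascript
// Toy model only (hypothetical objects, not a browser API): a page document
// containing an iframe whose ad has its own, separate document.
const adDocument = {
  nodes: [
    { tag: "div", id: "odds" },
    { tag: "button", id: "bet-now" },
  ],
};

const pageDocument = {
  nodes: [
    { tag: "h1", id: "headline" },
    { tag: "iframe", id: "ad-slot", contentDocument: adDocument },
  ],
};

// A document-scoped lookup: it only checks the nodes of the document it is given.
function findById(doc, id) {
  return doc.nodes.find((node) => node.id === id) || null;
}

// The workaround: find the iframe in the page first, then search its own document.
function findInFrame(doc, frameId, id) {
  const frame = findById(doc, frameId);
  if (!frame || !frame.contentDocument) return null;
  return findById(frame.contentDocument, id);
}

console.log(findById(pageDocument, "bet-now")); // → null
console.log(findInFrame(pageDocument, "ad-slot", "bet-now")); // → { tag: 'button', id: 'bet-now' }
```

Real tools follow the same shape: in Puppeteer, for example, you can get a handle on the iFrame element and call `contentFrame()` on it, then search inside that frame instead of the top-level page.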

I first tried writing the tests in Gemini, which is built on Selenium. Unfortunately, due to the way it requires you to write your selectors, it was just impossible to get it to find things in the iFrame to click on. This is fine for visual regression tests – you don’t need to click on an ad for it to load, you can just screenshot the box it’s in and be done – but I wanted the tests to have coverage of click dropdowns in some of our ads, hover states, and the possibility of extending to other types of test in the same tool.

After watching a video on testing with Puppeteer, I decided to give it a shot – and now, even though I might be going back to a different tool I can say I know how to write tests using Puppeteer. So not a moment of that time was wasted.

(unrelated, but a piece of my tooth just came off! I have a feeling my bank holiday weekend is about to take a turn for the painful)

I don’t really think any details of implementation or code I could go through would be useful for anyone else, so I won’t delve into that. I’ll tell you about my thinking at the time, and the pitfalls I met.

I made a set of basic Visual Regression Tests – that is, a test that takes a screenshot of a webpage and compares it to the ‘golden’ image, aka what it looked like yesterday before we released and broke everything. This can be used to quickly bring up visual bugs introduced into your testing slice, so you don’t have to go to every single page yourself. I chose this because a regression test for me was looking at tens or hundreds of ads to see if anything was accidentally broken by new code. Every release (sometimes multiple times a day), manually, by eye. Now I don’t know about you, but if I’m looking at a squillion bazillion pictures and trying to compare them to how those same squillion bazillion pictures looked yesterday then I’m not going to notice that a button in one of them is three pixels higher than it should be. I’m just not gunna see that, so it will go unnoticed until a few months’ time when there is an ad with an unusually large amount of text, which wraps to a line that includes those three pixels. Now the button is overlapping with the text – oh, if only I had spotted the change that caused this, all that time ago…

I started out by making a new project folder and following the Puppeteer README. It was really easy to just copy and paste the simple example in the docs, enter the url of an ad I wanted into the example and get a screenshot.

OK, so it takes a screenshot. It’s not a test – it doesn’t ascertain whether something is true or false – but it’s the first step. What I needed next was some way of comparing the image created with one that already exists of the same webpage. I had a root around and found a library for visual regression testing, made to work with Puppeteer. Cool. I added Differencify to the project and again simply added my url into the new example, along with a few additions from the docs.
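Differencify handles the comparison for you, but the underlying idea is simple enough to sketch. The function below is a minimal illustration, not Differencify’s actual algorithm or API: it assumes both screenshots have already been decoded into raw RGBA pixel buffers of the same size (decoding the PNG files is left out), and `compareImages`, `tolerance` and `maxDiffRatio` are hypothetical names. The key point is that the comparison ends in a true/false answer, which is what turns a screenshot into a test, and the per-pixel mask is the kind of data a diff image can be drawn from.

```javascript
// Hypothetical sketch: compare two same-sized RGBA buffers (4 bytes per pixel).
// A pixel counts as changed if any channel differs by more than `tolerance`.
// The test passes if the share of changed pixels is at most `maxDiffRatio`.
function compareImages(golden, current, width, height, { tolerance = 0, maxDiffRatio = 0 } = {}) {
  const pixelCount = width * height;
  if (golden.length !== pixelCount * 4 || current.length !== pixelCount * 4) {
    throw new Error("Both images must be width * height * 4 bytes (RGBA)");
  }
  const diffMask = new Uint8Array(pixelCount); // 1 marks a changed pixel
  let diffPixels = 0;
  for (let p = 0; p < pixelCount; p++) {
    const i = p * 4;
    for (let c = 0; c < 4; c++) {
      if (Math.abs(golden[i + c] - current[i + c]) > tolerance) {
        diffMask[p] = 1;
        diffPixels++;
        break; // one differing channel is enough to mark this pixel
      }
    }
  }
  const diffRatio = diffPixels / pixelCount;
  return { pass: diffRatio <= maxDiffRatio, diffPixels, diffRatio, diffMask };
}

// Usage: a 2x2 all-white "golden" image, and a copy where one pixel changed.
const golden = new Uint8Array(2 * 2 * 4).fill(255);
const current = Uint8Array.from(golden);
current[0] = 0; // red channel of the first pixel
const result = compareImages(golden, current, 2, 2);
console.log(result.pass, result.diffPixels); // → false 1
```

Loosening `tolerance` or `maxDiffRatio` can hide rendering noise, but it can also hide exactly the kind of three-pixel button shift mentioned earlier, so keeping them strict at first seems the safer default.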

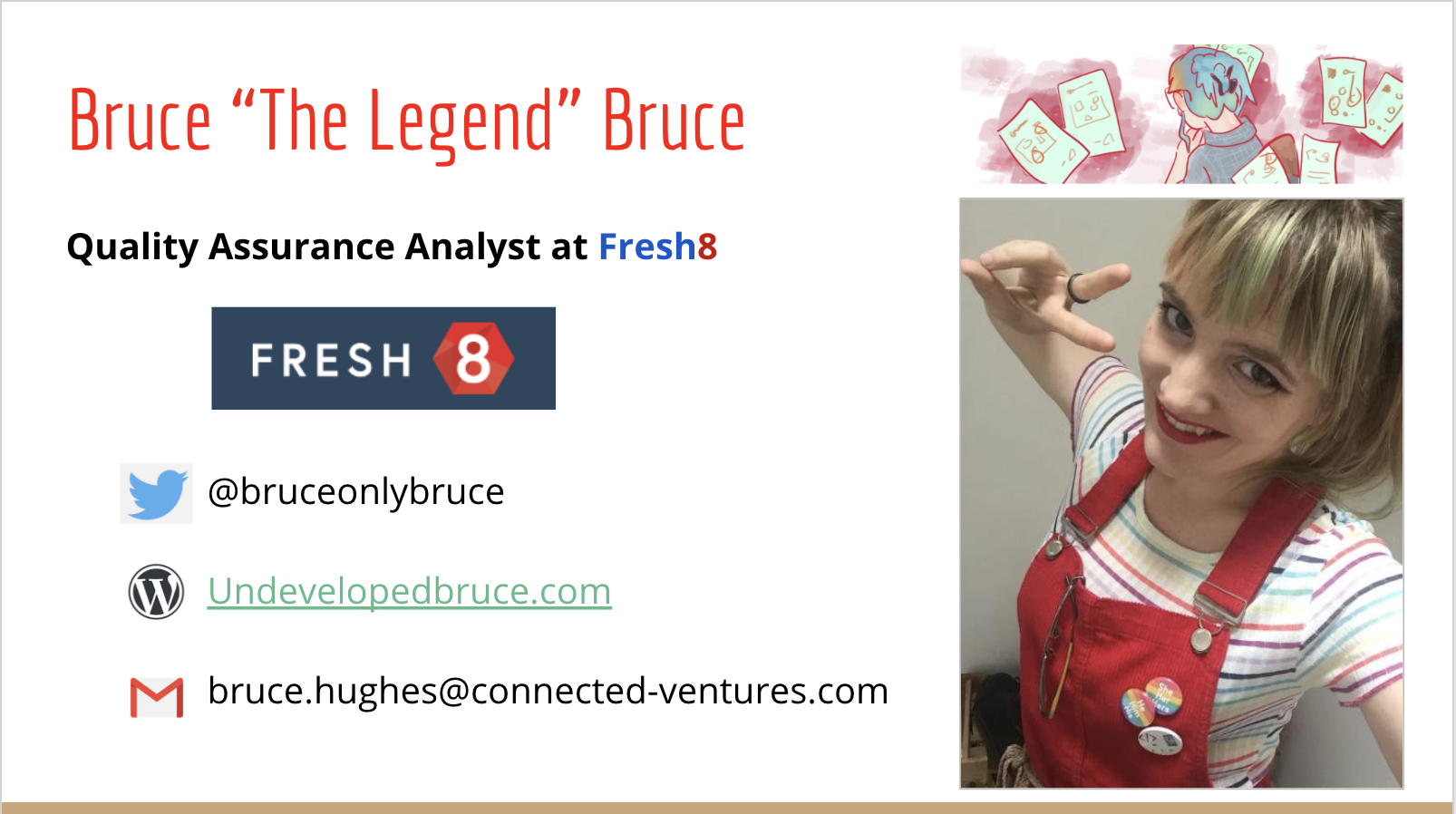

Since the Differencify readme suggested it, I also added the test runner Jest to the project. This makes it easy to run your tests and see the results in a nice clear format:

(oh my days, my mouth hurts whyyyy)

I came across a bit of confusion here, since the Differencify docs have two types of example – chained and unchained. Since the original example from the Puppeteer docs had been unchained, I carried on with that. But a lot of the more advanced examples in the Differencify library were only written chained, so I had to translate them using a brain that did not know how async/await works.

As soon as I started changing things around, adding in lines to make it find elements in the iFrame and save them as constants to be used for clicking or returning values later… I got constant errors saying that there were promises left open, and I had no idea what promises are.

So I went and watched a few videos on what promises are, and found that although I could grasp the concept well enough, I didn’t understand the rules of writing them – so I asked a developer to help out. Since it was a few days before Christmas and half the team was away, the dev in question had more than enough time to go through the code with me. He hadn’t done much with ES6 (modern) JavaScript either, so we kind of muddled through together until it finally ran for the first time!
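For anyone stuck in the same spot with promises, here’s a minimal sketch of the plain-JavaScript ideas underneath. Differencify’s “chained” mode is its own method-chaining API, which this does not reproduce; instead it shows one asynchronous flow written with `.then()`, the same flow with `async`/`await`, and the classic bug of a missing `await`. `openPage` and `takeScreenshot` are hypothetical stand-ins that just resolve after a short delay, not Puppeteer calls.

```javascript
// Hypothetical stand-ins for asynchronous browser steps: each returns a Promise.
const delay = (ms, value) => new Promise((resolve) => setTimeout(() => resolve(value), ms));
const openPage = (url) => delay(10, { url });
const takeScreenshot = (page) => delay(10, `screenshot of ${page.url}`);

// 1. Promise chaining: each step's result is passed to the next with .then().
function withThen(url) {
  return openPage(url).then((page) => takeScreenshot(page));
}

// 2. async/await: the same steps, read top to bottom.
async function withAwait(url) {
  const page = await openPage(url);
  return takeScreenshot(page);
}

// 3. The classic mistake: no `await`, so `page` is a pending Promise, not the page.
async function forgotAwait(url) {
  const page = openPage(url); // missing await
  return typeof page.url; // a Promise has no .url property
}

(async () => {
  console.log(await withThen("https://example.com/ad")); // → screenshot of https://example.com/ad
  console.log(await withAwait("https://example.com/ad")); // → screenshot of https://example.com/ad
  console.log(await forgotAwait("https://example.com/ad")); // → undefined
})();
```

The missing-`await` version doesn’t crash; it quietly works with a pending promise instead of the value, which is why that kind of slip tends to surface later as confusing errors or leftover asynchronous work.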

It saved the picture to a file locally, which I had to find and open to see the screenshot. It also didn’t show the diff image, which would highlight things that had changed.

I needed a nice tidy report that would be generated from a template whenever the test was run. I could have written something myself, but there is a Jest reporter for Differencify that already exists so I installed that. There were a few teething problems with it if I recall, things that were small but frustrating until I worked out what was causing them – for example, two tests that had the same name in the test text, causing them to share an image file.
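The shared-image problem is easy to reproduce on paper: if the image file name is derived from the test name, two tests with the same name end up writing to, and comparing against, the same file. Below is a small sketch of a pre-run check for that. The slug rule in `snapshotFileName` (lowercase, non-alphanumerics turned into hyphens) is an assumption for illustration, not how the Differencify reporter actually names its files; with a stricter rule, only exact duplicates would collide.

```javascript
// Hypothetical naming rule: derive an image file name from the test name.
function snapshotFileName(testName) {
  return (
    testName
      .trim()
      .toLowerCase()
      .replace(/[^a-z0-9]+/g, "-") // spaces and punctuation become hyphens
      .replace(/^-|-$/g, "") + ".png" // trim leading/trailing hyphens
  );
}

// Report every pair of tests that would share one image file.
function findSnapshotCollisions(testNames) {
  const seen = new Map(); // file name -> first test name that used it
  const collisions = [];
  for (const name of testNames) {
    const file = snapshotFileName(name);
    if (seen.has(file)) {
      collisions.push({ file, tests: [seen.get(file), name] });
    } else {
      seen.set(file, name);
    }
  }
  return collisions;
}

console.log(findSnapshotCollisions(["Football 300x250", "Tennis 728x90", "football 300x250"]));
```

Running a check like this before the suite (or failing fast when it finds anything) turns a confusing “why do these two tests share a picture?” moment into a clear error message.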

Anyway, this is all too much detail. I now had a test – and by copy-pasting that test and changing some details, I made it into a set of 20 tests that were designed to cover every molecule that we used to build our ads at least once. The thing was, these tests were basically identical, with only a few little differences between them, eg the viewport size, the url and the name of the test.

It was a waste to have twenty files all basically doing the same thing, when surely one file could do all of it? What if we made changes to our url structure, or Puppeteer change the format for writing tests? I’d have to go through each of those files and change the same thing twenty times – or more, as the list grew. This list didn’t cover every frame we use, and only used our home brand. What about using other brands? That would mean having potentially hundreds of variations on the same code, all in separate files. I did not relish the thought of trying to maintain or update that.

I’ve done a few bits and bobs of work on the ads myself, just some styling changes and the creation of one responsive ad pre-roll, as part of learning. Gotta know how something is made and how it works in order to know how to test it – or at least, how to make effective bug reports. So I knew about JSON files, which can hold things like lists of objects that you can refer to from elsewhere.

Such a file could be a list of brand names, and the corresponding colours that should be added to text on their ads (ours don’t work like this, but it’s an example shh). In the case of my tests, I took each test name and its size, as well as a sport which had to change depending on which type of config was showing, and made a list.
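For anyone who hasn’t seen one, here’s roughly what such a list might look like, with a small loader that checks each entry has the fields the tests rely on. The field names (`name`, `width`, `height`, `sport`) and the values are hypothetical, and the JSON is inlined as a string so the sketch runs on its own; in a real project you’d more likely keep it in a separate file and load it with `require("./configs.json")`, which Node supports for `.json` files.

```javascript
// A hypothetical config list, inlined as JSON text so this runs standalone.
const configsJson = `[
  { "name": "leaderboard", "width": 728, "height": 90,  "sport": "football" },
  { "name": "mpu",         "width": 300, "height": 250, "sport": "tennis" }
]`;

// Parse the list and fail early if an entry is missing a field the tests need.
function loadConfigs(text) {
  const configs = JSON.parse(text);
  if (!Array.isArray(configs)) {
    throw new Error("Expected a JSON array of configs");
  }
  for (const [i, c] of configs.entries()) {
    const valid =
      typeof c.name === "string" &&
      Number.isInteger(c.width) &&
      Number.isInteger(c.height) &&
      typeof c.sport === "string";
    if (!valid) {
      throw new Error(`Config ${i} is missing name, width, height or sport`);
    }
  }
  return configs;
}

const configs = loadConfigs(configsJson);
console.log(configs.length, configs[1].sport); // → 2 tennis
```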

I was pretty proud of this list. It was very… listy… Yup. It sure was a list, that was for certain. I nodded to myself, made a proud pouty face at this incredible list, nodded a bit more.

The idea was, and I knew this in my brain, that I could have one file that looped through each item in the list, put the config text and sport into the test name and the url, and set the viewport size to the size specified in the list… and it would then spin up all those tests for me, run them and hey presto! One file, twenty tests.
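That loop can be sketched as a function that turns each config into a test case with a name, a url and a viewport size. This is a hedged illustration rather than the code I actually ended up with: `buildTestCases` is a hypothetical helper, the config fields are assumed to be `name`, `width`, `height` and `sport`, and the url pattern (`https://ads.example.com/...`) is made up.

```javascript
// Hypothetical helper: one test case per config entry.
function buildTestCases(configs, brand, baseUrl = "https://ads.example.com") {
  return configs.map((c) => ({
    name: `${brand} ${c.name} ${c.width}x${c.height}`,
    // Invented url pattern: brand and config in the path, sport as a query parameter.
    url: `${baseUrl}/${encodeURIComponent(brand)}/${encodeURIComponent(c.name)}?sport=${encodeURIComponent(c.sport)}`,
    viewport: { width: c.width, height: c.height },
  }));
}

const sampleConfigs = [
  { name: "leaderboard", width: 728, height: 90, sport: "football" },
  { name: "mpu", width: 300, height: 250, sport: "tennis" },
];
const exampleCases = buildTestCases(sampleConfigs, "home");
for (const tc of exampleCases) console.log(tc.name, tc.url);

// In a Jest test file, each case would then become one test, for example:
// exampleCases.forEach((tc) => {
//   test(tc.name, async () => { /* set viewport, open tc.url, screenshot, compare */ });
// });
```

Keeping the case-building separate from the test runner makes it easy to check the generated names and urls on their own. Jest also has a built-in `test.each` for this data-driven pattern, which is worth a look as an alternative to wrapping `test` in `forEach`.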

I knew it was possible, and probably very easy. I went back to those ol’ JavaScript tutorials for some guidance, but they couldn’t help me now. This was an actual project, and I didn’t even know what phrase to search in order to come up with the answers I needed. Googling questions is very difficult when you don’t have the vocabulary to describe what your problem is. It was like trying to ask why the sky is blue, without knowing the words for “sky” or “blue”. Why is the big up thing the same colour as my right sock? (I just googled this to see what would come up, and the top result was an article called The seven rules of wearing socks. I’m a sock anarchist, so I didn’t read it.)

Since it was now a couple of months past Christmas, I talked with my manager and we put some time in the calendar to pair on it the following week. This was not a done-in-a-day project, no matter how simple. I had my actual job to be doing too.

It took us about an hour and a half to do this. Dom was great to pair with, since he kept me actually writing everything, and helped guide me towards answers instead of giving them outright. I’ve put the end result below, and tried to annotate it briefly since I don’t think the details of anything I did are that useful for anyone else but dem peeps might want to see it anyway.

(For clarity of annotation…

Red: points to line that gets the json file that lists all my configs with the correct size and sport, so that the list of objects is available to use in this file

Pink: points to line that makes a variable called ‘brand’, and makes that equal to the third argument of the test command. Dirty way of doing it, but it allows me to run the same test for different brands as required from console.

Purple: points to lines showing that the ‘brand’ is then inserted into a console.log to return which brand the test is being run on, and part of the test name

Dark blue: points to forEach that wraps the test so that the test is run for each item in the json list

Cyan: points to places in the test where options from the objects are used

Green: points to where the function to create a custom url for each test is made and called, using the brand and options from the json list

Applejack: points to the line that screenshots the page

Orange: points to the line that makes this a test, ie does it pass or fail the criteria?)
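On the “dirty” brand argument: `process.argv[2]` is the first argument after `node script.js`, since `argv[0]` is the Node executable and `argv[1]` is the script path. Test runners may treat extra command-line arguments as their own options or file patterns, so an environment variable is a common fallback. The sketch below is a hypothetical helper, not the original code; the `BRAND` variable name and the `"home"` default are assumptions.

```javascript
// Hypothetical helper: take the brand from the first user argument
// (process.argv[2]), else from a BRAND environment variable, else a default.
function getBrand(argv = process.argv, env = process.env, fallback = "home") {
  const fromArgs = argv[2];
  if (fromArgs && !fromArgs.startsWith("-")) return fromArgs; // ignore flags like --verbose
  return env.BRAND || fallback;
}

console.log(`Running visual tests for brand: ${getBrand()}`);
```

Passing `argv` and `env` in as parameters (with the real ones as defaults) keeps the helper easy to check without actually launching it from a terminal.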

So that’s how the land lies now. The images are also stored in a Google Cloud bucket instead of locally on my machine, so that the tests can be run by other people and eventually through the CI, but there’s more work to be done on this. Is this the best test I could possibly write to fulfil this function? No.

But it’s the best I knew how to write at the time.

It seemed while making it that the ability to interact with the ads to capture different states would be really important, but after talking more with the rest of the team and really thinking about what type of coverage we need, it turns out it would have been better to make simple tests that could be run cross-browser. For that purpose, I’ll probably go back to Gemini, since that can be made to work with BrowserStack and we’ll be able to get coverage on a range of desktop and mobile devices.

There are also barriers to continued work on this project, since the json file is just a temporary solution until I can work with the team to connect the tests up to a manifest service that keeps record of every config we have for each brand. We’re reluctant to do this work until we’ve purged the manifest of all unused and duplicate configs, which is in a ticket somewhere near the bottom of the backlog, behind a torrent of client work and tooling.

So it’s a really simple test with limited coverage that I needed a lot of help on towards the end, and will need more help to move forwards with in the future. I’ll tell you what, though. I’m not scared of writing tests any more, nor of learning new languages or tools.

The reason I think it’s so important for new testers to just get stuck in, even if they end up making something of limited use, is because you learn how to actually put things together. How to make a plan, how to research, how to resolve bugs, how to write more than a classifier for types of dogs.

Those are skills you can take and apply to whatever you do next, be it the same type of test but better, or a new tool or language, or a totally different kind of project.

You have to learn to do one thing – doesn’t matter what it is – because what you’re really learning is that it’s possible to learn it. And if it was possible to learn that, what else is possible for you? Then you can be fearless.