I’ve wanted to do some proper sessioned exploratory testing for a while now. It’s not like non-checkbox type testing is foreign to me as part of my day-to-day job, but I’ve never set aside time as an individual or a group to specifically look for scenarios or problems that have previously gone uncharted.

It seems that every time I start trying to plan a session, I get stuck writing the charter because I just want to do the activity for its own sake, and haven’t got a particular question or hypothesis to answer. Just vague ideas like “look at analytics” or “cross-browser test responsive ad sizes more”. When it comes to breaking those down and defining a scope and methodology, those statements turn out to be so much air.

And then I found a bug while (unthinkingly exploratory) testing random scenarios related to a new UI we’re developing. Details <redacted> until we’ve fixed the issue, but basically it’s a really weird and somewhat unlikely scenario that only affects a really small number of our internal users.

I brought this up with my direct manager, and then we reached out to the other team’s QA as we thought their UI might also be affected by the same bug. Together, we decided to do an exploratory testing session the following day to see if we could discover the scope of the bug, as well as find other ways of making it happen.

We have a weekly time set aside called QA-Dojo where Joe (the other QA) and I can call upon anyone else we want (with enough notice) to help with whatever we’re trying to learn. This has included sessions on our system architecture, analytics/tracking, defining our role better and making a Slackbot, among many other things.

At the end of the day (before the Dojo), we searched online for an exploratory test charter template, and decided to use this one since it was pretty clearly structured. It only took us about half an hour to fill out, including banter time. I’ve redacted the details, but the general idea of it is below:

Exploratory Testing Charter

Purpose: To find ways of reproducing the bug Bruce found

Testers: Joeeeeeeeeeeee and Bruuuuuuuuuuuce and Dooooooooooooom

Date: 04/06/2019

Timebox or Duration: 1.5hr

Target or Scope: We will be exploring the X feature in Y/Z UIs to recreate ABC.

References or Files: <links to UIs and note-taking doc>

Tester Tactics or Tester Charter:

Joe will be approaching the exercise with a logical Black hat using his knowledge of the console system to methodically go through practical and realistic scenarios.

Bruce will be approaching the exercise with the emotional Red hat, using initiative and gut reactions to unmethodically go through spontaneous and unusual scenarios without any justification (my normal testing strategy).

Dom will be approaching the exercise with the creative Green hat, using investigation and outside-the-box creative thinking to unmethodically investigate responses to provocation.

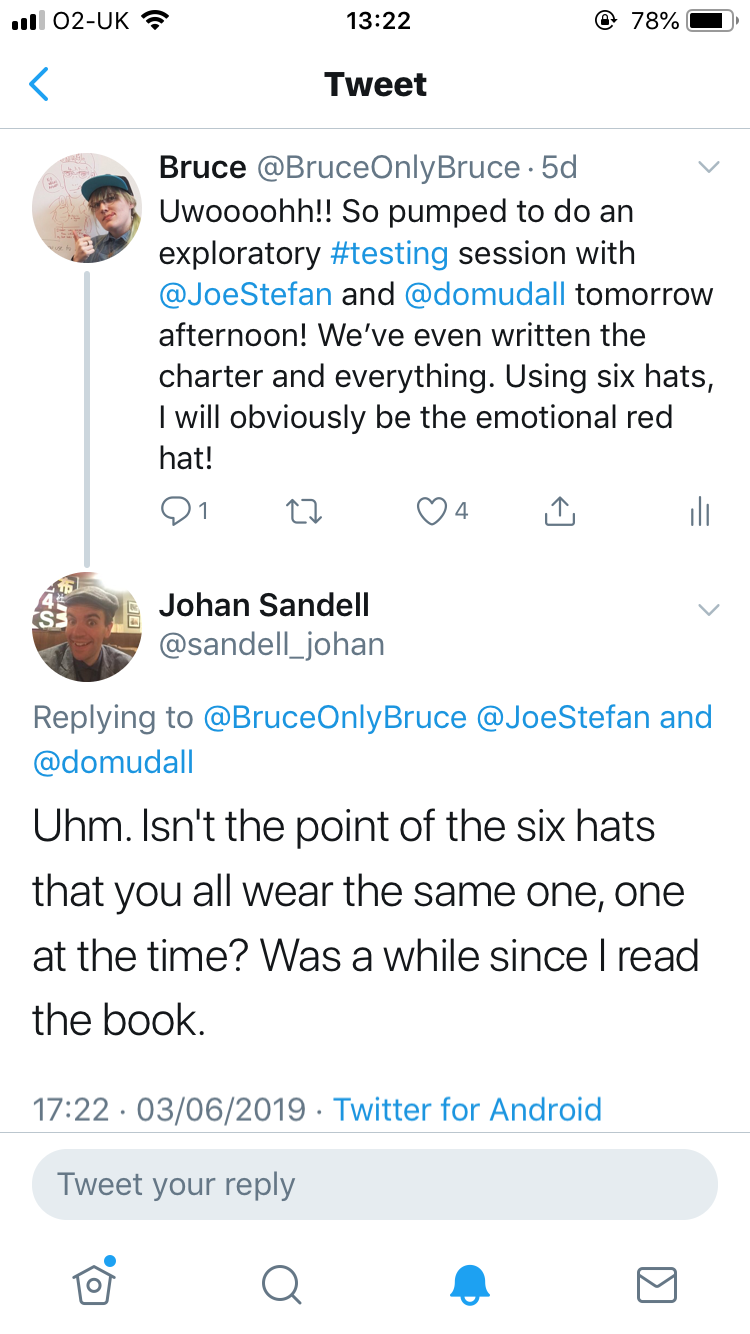

We’d decided to use the Six Thinking Hats method because it was suggested by the article, but we didn’t actually understand it. Thankfully, Johan pointed out the mistake on Twitter when I naively posted about how excited I was to be the red hat. “Spontaneous and unusual scenarios without any justification” is my jam, you know.

Hurriedly, I went about finding more information on the technique so that I would no longer look like a total and utter lemon. There are plenty of great resources out there (including the book that started it all) so I’ll just give a quick summary for those who aren’t familiar.

The six thinking hats is a tool for planning. There are six coloured hats, and placing one on your head (literally or metaphorically) means adopting the point of view represented by it. Blue is for planning; red for emotions and instincts; green for creative thinking; black for pessimism; yellow for positivity; and white for analysis of facts and data. You wear one hat at a time, either as an individual or as a group, and can use as few or as many hats as you’d like. You can even go back to hats you’ve previously used, and the blue hat specifically is designed to be worn at the start, middle and end.

I did some searching an hour before the session was due to take place, and found this great post on using the six thinking hats for exploratory testing. We used this as a basis for deciding which hats we would wear in which order, and how we’d define them without being experts on the use of coloured hats (in this context, at least: I am an expert on coloured hats in other contexts, and won an award for “Most eclectic hat collection” in my A-level Biology class, so I’m not a total hat noob). Edward de Bono’s book is definitely on my reading list now though.

The main event

We used the video meeting tool Zoom so that Dom (my direct manager) could work remotely while Joe and I were together in the second meeting room (the walls smell strange, which is why people usually use the first meeting room).

The first 10 minutes were spent deciding which hats we would use in which order (which is in itself a blue hat activity) as well as how we would dole out responsibilities. We decided that Joe would be sharing his screen to the meeting, and actually doing the physical task of testing since he is most familiar with the UI. I would be in charge of note-taking, as well as keeping us on track, and Dom was the brains of the operation in that he is the only one who knows in any depth how the bug actually works and why it happens.

We ignored the white and red hats (I didn’t cry), and planned to start with yellow (happy paths) then green for weird user behaviours that hadn’t been considered before. Then black for breaking it any way we could without the assumption of good user intentions, including some POST requests through Postman. We thought this would be a fun note to end on, even though we weren’t really concentrating on malicious or intentional actions, just accidents for the most part.

After less than 10 minutes of yellow, we donned blue once more to decide whether or not to drop the positive hat. Joe covers the happy paths in his day-to-day regression testing, so although it was somewhat unfamiliar for me, it was hardly exploratory testing when we already knew the answers to any question we could ask.

I enjoyed green hat a lot, since creative out-of-the-box thinking is my speciality, being a weirdo in general. We were able to properly establish the scope of the bug by taking similar user journeys in lots of different ways. The black hat was guided by Dom, who knows best what we needed in the Postman requests to play about with things, but nothing really came of it apart from us feeling like a bunch of hackerz™.

Reviewing the session

We think the session was a success. We kept to the allotted time slot and followed the process we had planned. Joe has created tickets for solving the issues we found, although the UI is being rebuilt at the moment so it’s a case of building in safeguards. The scope was supposed to include a list of different resource types, but we forgot and concentrated on just one. In retrospect, I think this was a good way of going about it anyway, as the behaviours should be the same. It might be a place for future investigation though.

When going through the black hat scenarios, we were a bit lost when it came to maliciously trying to break things. We just don’t have the skillset or breadth of knowledge for breaking things very well. From this, we decided while reviewing at the end of the session that we should take a look at the OWASP Top 10 security concerns in a future session.

We were definitely a good team to do this exercise together, as we each have different specialisms and knowledge that overlapped sufficiently for discussion but were distinct enough that we were all able to contribute meaningfully. I think it was also valuable to do, and now that we’ve done one it’ll be easier in the future to plan and lead more with other people in the team. It’s my plan to make a couple more exploratory test charters and invite small groups of developers to do timed exercises, and as time goes on hopefully I can get other people writing the charters and thinking about this without my interference.

[…] Our first exploratory testing session Written by: UndevelopedBruce […]
